The Short Answer: By Design, Not by Accident

Snapchat's disappearing message feature was marketed as fun and spontaneous. But its practical effect — the elimination of a permanent message record — is precisely what makes it dangerous for minors. Predators know this. Research by the Thorn organization found that Snapchat was among the top three platforms used by predators to contact child victims, with its disappearing content specifically cited as a reason for choosing the platform.

"Snapchat's disappearing message feature is not a neutral design choice. It is a system that destroys evidence of abuse — and Snap Inc. has known this for years while continuing to operate the platform with inadequate protections for minors."

— Composite of allegations from multiple Snapchat child exploitation civil lawsuits, 2024–2026

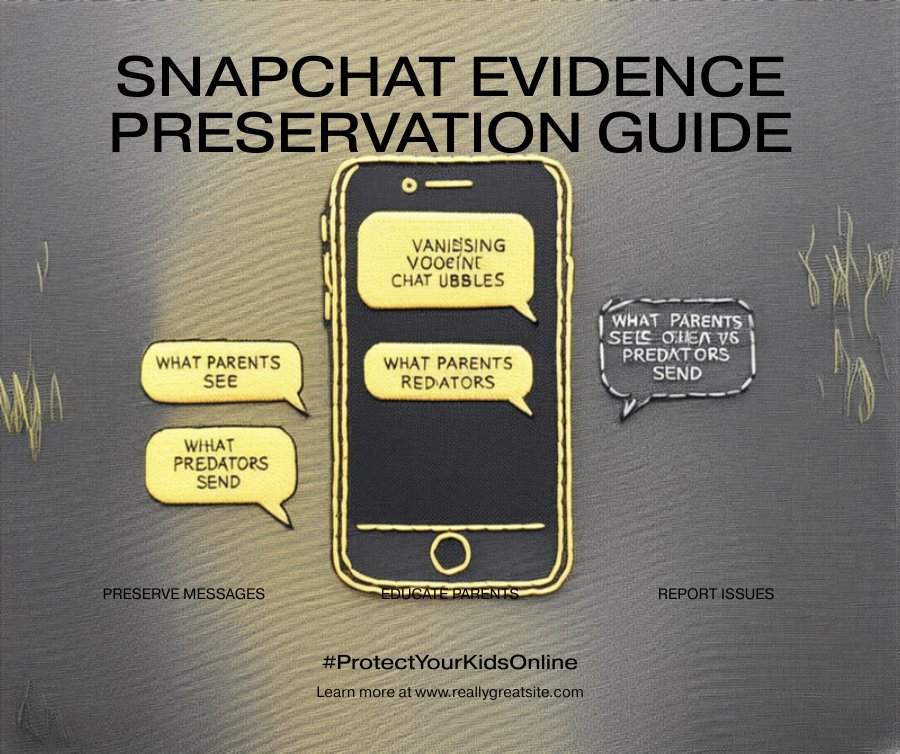

This article explains exactly how the feature works, how predators weaponize it in grooming sequences, and what families need to do — including preserving whatever evidence they can — if their child has been targeted.

How Snapchat's Disappearing Message System Actually Works

Most parents understand that Snapchat messages "disappear." But the mechanics go deeper than that — and each layer serves predators in a different way.

Snaps (Photos & Videos)

Disappear 1–10 seconds after viewing. Replay is allowed once, then gone. The sender is notified if a screenshot is taken — but many workarounds exist (secondary camera, airplane mode, etc.).

Chat Messages

Default setting: delete after viewing. Users can change this to "delete after 24 hours" or "never", but the default favors disappearance, and many users (especially children) never change it.

Server-Side Deletion

Snap's servers delete unopened Snaps after 30 days and opened Snaps "shortly after" being opened. Chat messages are deleted from servers after all parties have viewed them. This means no server-side backup exists after the fact.
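
For readers who find rules easier to follow as logic, the deletion behavior described above can be sketched as a small model. This is an illustration only, not Snap's actual code: the class and function names are hypothetical, and the one-minute value standing in for "shortly after" is an assumption made so the example can run.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional, Set

UNOPENED_SNAP_TTL = timedelta(days=30)    # "unopened Snaps after 30 days" (from the text)
OPENED_SNAP_GRACE = timedelta(minutes=1)  # ASSUMPTION: placeholder for "shortly after"


@dataclass
class Snap:
    """Hypothetical record of a single Snap held on a server."""
    sent_at: datetime
    opened_at: Optional[datetime] = None


def snap_deletion_time(snap: Snap) -> datetime:
    """Return when this modeled server would delete the Snap."""
    if snap.opened_at is not None:
        # Opened Snaps are removed shortly after being opened.
        return snap.opened_at + OPENED_SNAP_GRACE
    # Unopened Snaps are removed once the 30-day window passes.
    return snap.sent_at + UNOPENED_SNAP_TTL


def chat_deleted(viewed_by: Set[str], participants: Set[str]) -> bool:
    """A chat is deleted once every participant has viewed it."""
    return participants <= viewed_by


sent = datetime(2025, 1, 1, 12, 0)
print(snap_deletion_time(Snap(sent)))  # 2025-01-31 12:00:00
```

In this model, nothing remains to be retrieved once the deletion time has passed, which is why the timing of a preservation request matters.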

Memories (Optional Save)

Snapchat allows users to save content to "Memories", but this is opt-in and controlled by the sender. Predators never save their own abuse content to Memories; victims often do not know how to save incoming Snaps before they vanish.

Snap Inc. made every one of these features a deliberate design choice. Lawsuits argue that a reasonable platform operator would have implemented automatic evidence retention when accounts involving minors are flagged — the same way email providers retain data for legal holds. Snap chose not to.

The 6-Step Grooming Sequence Predators Use on Snapchat

Child protection researchers and law enforcement have documented a consistent pattern in how predators exploit Snapchat's architecture. Each step is designed to deepen control while minimizing recoverable evidence.

1. Predators add children using Snapchat's Quick Add feature (which surfaces accounts with mutual friends or contacts) or by finding usernames shared publicly on TikTok, Instagram, or gaming chats. First messages are casual and non-threatening.

2. Snapchat's "Streak" mechanic rewards daily communication with a fire emoji and streak counter. Predators use this gamification deliberately: children become emotionally invested in maintaining streaks, making them reluctant to stop communicating even when uncomfortable.

3. Predators invite children to a "private story" (a hidden Story visible only to selected friends), creating a sense of secrecy and exclusivity. Children are told to keep this private from parents, framing it as a special bond.

4. The predator begins sending mildly inappropriate content as Snaps, knowing they will disappear within seconds. This tests the child's reaction while leaving no permanent record. If the child doesn't report, the predator escalates. If the child takes a screenshot, Snapchat notifies the sender, who then blocks the child and moves on.

5. Once trust is established, the predator requests explicit images ("they disappear, no one will ever see them") or attempts to arrange an in-person meeting. The disappearing nature of Snaps is used to give the child a false assurance of safety.

6. If the child sends an image, the predator captures it (using the secondary-camera workaround) and begins threatening to share it unless the child sends more or complies with demands. The child believes the image "disappeared" and does not realize the predator saved it.

Why Snap's Design Choices Enable Every Step

Each feature in the grooming sequence maps directly to a Snapchat design decision. Legal experts argue these are not unforeseen consequences — they are predictable outcomes of deliberate product choices.

| Snapchat Feature | Predator Use | Safer Alternative Snap Could Implement |
|---|---|---|
| Disappearing messages (default) | Conduct abuse without permanent evidence | Retain metadata; flag cross-age-gap conversations |
| Quick Add / friend suggestions | Surface minor accounts to unknown adults | Disable Quick Add for under-18 accounts |
| Streak mechanic | Create emotional dependency and daily contact | Disable streaks with unverified adult accounts |
| No age verification | Adults pose as peers; no verification barrier | ID-based age verification at account creation |
| Screenshot notification loophole | Abuse images captured without child knowing | Block image transmission to unverified accounts |

Snapchat implemented the Family Center in 2022 — but critics note it is opt-in, requires the child to accept the parent's request, and does not show message content. It addresses optics, not the core design problems listed above.
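
To make the "safer alternative" column of the table more concrete, three of its rows can be sketched as simple rules. This is a hypothetical illustration, not a description of any system Snap operates: the `Account` type, the function names, and the four-year age-gap threshold are all assumptions chosen for the example.

```python
from dataclasses import dataclass

MAX_AGE_GAP_YEARS = 4  # ASSUMPTION: illustrative threshold, not from any source


@dataclass(frozen=True)
class Account:
    """Hypothetical account profile used only for this sketch."""
    age: int
    age_verified: bool


def is_minor(account: Account) -> bool:
    return account.age < 18


def quick_add_enabled(account: Account) -> bool:
    """Table row: disable Quick Add for under-18 accounts."""
    return not is_minor(account)


def streaks_allowed(a: Account, b: Account) -> bool:
    """Table row: no streaks between a minor and an unverified adult."""
    for minor, other in ((a, b), (b, a)):
        if is_minor(minor) and not is_minor(other) and not other.age_verified:
            return False
    return True


def flag_conversation(a: Account, b: Account) -> bool:
    """Table row: flag conversations that cross a large age gap with a minor."""
    exactly_one_minor = is_minor(a) != is_minor(b)
    return exactly_one_minor and abs(a.age - b.age) >= MAX_AGE_GAP_YEARS


child = Account(age=14, age_verified=False)
unverified_adult = Account(age=35, age_verified=False)
print(streaks_allowed(child, unverified_adult))    # False
print(flag_conversation(child, unverified_adult))  # True
```

The rules are short, which is part of the argument made in the lawsuits: the safeguards in the table are design decisions rather than hard engineering problems. Note that every rule here depends on accurate ages, which is why the table also lists age verification.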

Evidence Preservation: What to Do in the First 48 Hours

Because Snapchat's architecture destroys evidence automatically, the window for preservation is narrow. The actions you take in the first 48 hours can determine whether a legal case is viable.

What the Law Says About Snap's Liability

Civil lawsuits against Snap Inc. have multiplied since 2023. Here is what legal experts argue and what courts have allowed to proceed:

Snap's platform is a defective product — designed in a way that is unreasonably dangerous to minors. The disappearing message feature and lack of age verification are specific design defects alleged in multiple complaints.

Snap knew or should have known that its platform was being used to exploit children. Despite this knowledge, it failed to implement reasonable safeguards. This is the core of negligence claims filed in California, New York, and other states.

The Children's Online Privacy Protection Act requires platforms to obtain parental consent before collecting data from users under 13. The FTC has found evidence that Snapchat knowingly allowed under-13 users — violating COPPA requirements.

Several states have enacted or are enacting platform liability laws for CSAM facilitation. These bypass Section 230 immunity by targeting the platform's role in production and distribution, not just hosting.

Section 230 of the Communications Decency Act has historically shielded platforms from liability for third-party content. However, courts have increasingly allowed claims targeting platform design decisions — rather than specific user-generated content — to proceed past motions to dismiss.

Frequently Asked Questions

Why do predators specifically choose Snapchat over other platforms?

Can deleted or disappeared Snapchat messages be recovered?

What should I do if my child received inappropriate content on Snapchat?

Is Snap Inc. being held legally responsible for child exploitation on the platform?

How is Snapchat's disappearing content different from simply deleting messages?

Was Your Child Targeted on Snapchat?

Evidence disappears fast — but legal options don't. Connect with a Snapchat abuse attorney today. No fees unless you win. Consultation is completely free and confidential.

Get a Free Case Evaluation

No cost. No obligation. Available 24/7.